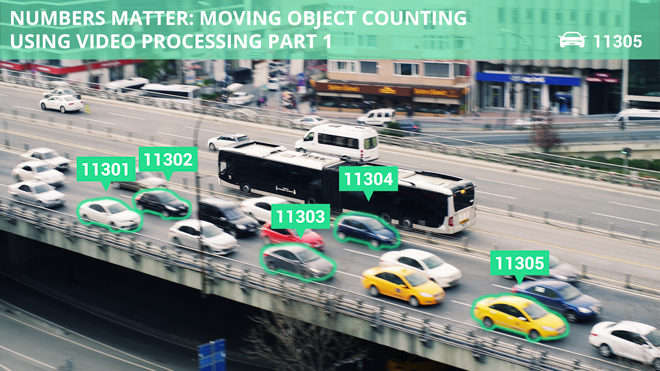

Numbers Matter – Moving Object Counting Using Image and Video Processing – Part 2

This is part 2 of our research on counting moving objects using video and image processing. It focuses on detecting the direction in which objects move.

Today's increasingly technology-driven world demands efficient and user-friendly solutions.

We collected real-time video data and applied slit imaging to count objects. The results show high accuracy, which, together with the method's affordability, makes the algorithm well suited to real-world use.

In the first part of this study, we showed examples of how the objects were counted by means of slit images generated using a single line as a region of interest.

In the following section, we discuss direction detection for moving objects. Identifying moving objects in video plays an important role in everyday life: it is crucial for traffic control and for video surveillance of various environments. There are multiple methods of motion detection, but they involve installing complex and expensive equipment and elaborate monitoring systems, which is tedious and resource-consuming.

The purpose of this article is to show how to detect the direction of moving objects (cars, in our case) in a video.

Algorithms to Detect Direction of Moving Objects

To identify the direction of a moving object, two parallel lines are needed. The direction is determined by which line the object touches first. First, a video frame is taken and a second line is drawn parallel to the first one, shifted slightly from it.

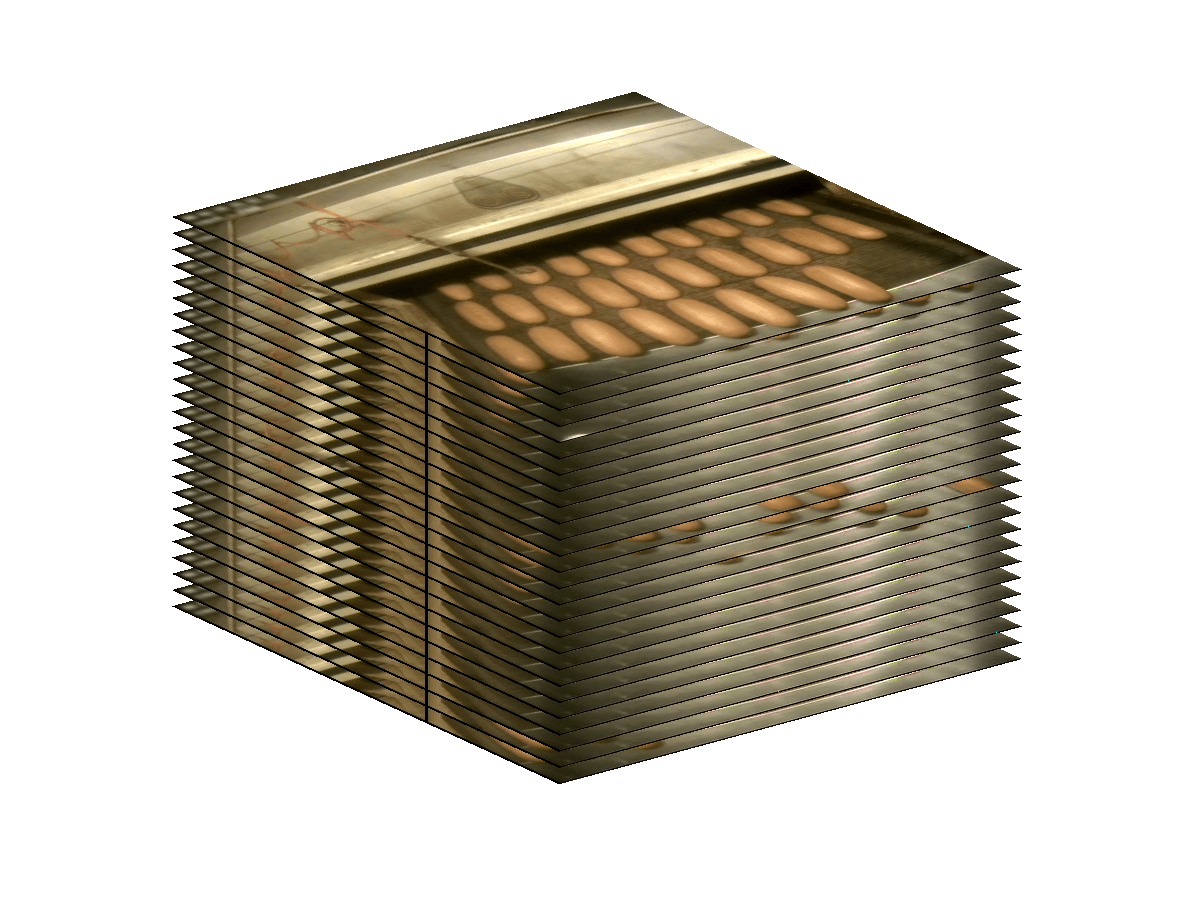

Two lines of pixels are thus taken from each frame and two slit images are formed: the first from line No 1 and the second from line No 2. The idea is that the main difference between the two slit images reflects the direction of motion of each object. Each car creates a blob in both slit images, and the two blobs are shifted relative to each other depending on the direction.
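As a minimal sketch of this step, the two slit images could be assembled as shown below. It assumes that each frame is a grayscale grid (a list of pixel rows), that the two lines are vertical pixel columns at the hypothetical positions `x1` and `x2`, and that every frame contributes one row to each slit image, so time runs along the vertical axis. The names `build_slit_images` and `make_demo_frames` are illustrative and are not taken from the original implementation.

```python
def build_slit_images(frames, x1, x2):
    """Stack pixel columns x1 and x2 of every frame into two slit images.

    Row t of each slit image holds the pixels under the line in frame t,
    so the vertical axis of a slit image is time (an assumption).
    """
    slit_1, slit_2 = [], []
    for frame in frames:
        slit_1.append([row[x1] for row in frame])
        slit_2.append([row[x2] for row in frame])
    return slit_1, slit_2


def make_demo_frames(width=6, height=3, n_frames=6):
    """Synthetic clip: a bright column moves right to left, one pixel per frame."""
    frames = []
    for t in range(n_frames):
        frame = [[0] * width for _ in range(height)]
        x = width - 1 - t  # object position in frame t
        if 0 <= x < width:
            for row in frame:
                row[x] = 255
        frames.append(frame)
    return frames


# Line No 1 at column 4, line No 2 at column 2 (hypothetical positions).
slit_1, slit_2 = build_slit_images(make_demo_frames(), x1=4, x2=2)
```

In this synthetic clip the object reaches line No 1 two frames before line No 2, so its trace sits higher in the first slit image than in the second.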

First, matching pairs of blobs from the two slit images are found. A blob that has no pair is not taken into account. This raises the question of how reliably blobs on the two masks correspond to each other. Errors in the morphological processing can lead to discrepancies such as:

- two neighboring blobs representing two moving objects are fused together on one mask

- a blob of a moving object is split into multiple blobs on one mask

- a blob is removed from one of the masks but remains on the other

As a result of these differences between the slit images, one mask could contain a single blob where the other mask contained several blobs in the same area. We used the following algorithm to eliminate such cases.

First, the blobs on the first mask are processed one by one. Each blob is overlaid on the second mask. If the area it covers on the second mask contains points belonging to more than one blob, or contains no blob points at all, the blob is removed from the first mask.
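One pass of this filtering can be sketched as follows. This is an illustration under stated assumptions, not the original implementation: the masks are equally sized binary grids (lists of rows), blobs are 4-connected regions, and the names `label_blobs` and `drop_ambiguous_blobs` are hypothetical.

```python
from collections import deque


def label_blobs(mask):
    """Label 4-connected foreground regions; returns (labels, blob_count)."""
    height, width = len(mask), len(mask[0])
    labels = [[0] * width for _ in range(height)]
    count = 0
    for y in range(height):
        for x in range(width):
            if mask[y][x] and not labels[y][x]:
                count += 1
                labels[y][x] = count
                queue = deque([(y, x)])
                while queue:  # breadth-first flood fill of one blob
                    cy, cx = queue.popleft()
                    for ny, nx in ((cy - 1, cx), (cy + 1, cx),
                                   (cy, cx - 1), (cy, cx + 1)):
                        if (0 <= ny < height and 0 <= nx < width
                                and mask[ny][nx] and not labels[ny][nx]):
                            labels[ny][nx] = count
                            queue.append((ny, nx))
    return labels, count


def drop_ambiguous_blobs(mask_a, mask_b):
    """Copy of mask_a without blobs that cover zero or several blobs of mask_b."""
    labels_a, count_a = label_blobs(mask_a)
    labels_b, _ = label_blobs(mask_b)
    hits = {k: set() for k in range(1, count_a + 1)}
    for y, row in enumerate(labels_a):
        for x, a in enumerate(row):
            if a and labels_b[y][x]:
                hits[a].add(labels_b[y][x])
    return [[1 if a and len(hits[a]) == 1 else 0 for a in row]
            for row in labels_a]


mask_1 = [[1, 1, 0, 1, 1, 1, 0, 1]]  # three blobs
mask_2 = [[0, 1, 0, 1, 0, 1, 0, 0]]  # middle blob of mask_1 is split here
clean_1 = drop_ambiguous_blobs(mask_1, mask_2)
# Reverse pass; using the already filtered first mask is an assumption.
clean_2 = drop_ambiguous_blobs(mask_2, clean_1)
```

In the toy masks, the middle blob of `mask_1` covers two blobs of `mask_2` and the last blob covers none, so only the first blob survives on both masks.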

Then a second round of the algorithm is performed with the masks in reverse order. Next, we proceed to the actual direction measurement. For this purpose, the centroid (the coordinates of the center) of every blob is computed and compared with that of its counterpart on the other mask. Only the vertical components of the centroids are used. If the y-component of the centroid of the first blob is smaller (i.e. the blob lies higher) than that of the second blob, the moving object touched the first line first, that is, it moved from right to left (in this particular case). Directions cannot be defined in absolute terms; they are defined relative to the lines.
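The centroid comparison can be sketched as follows, assuming each blob is given as a list of `(y, x)` pixel coordinates in its slit image and that time runs downward, so a smaller y means an earlier frame. The function names and return values are illustrative only.

```python
def centroid_y(blob):
    """Mean vertical coordinate of a blob given as (y, x) pixel coordinates."""
    return sum(y for y, _ in blob) / len(blob)


def first_line_touched(blob_1, blob_2):
    """Return 1 or 2 for the line the object reached first, or None on a tie.

    blob_1 comes from the mask of line No 1, blob_2 is its paired blob
    from the mask of line No 2.
    """
    y1, y2 = centroid_y(blob_1), centroid_y(blob_2)
    if y1 < y2:
        return 1
    if y2 < y1:
        return 2
    return None


blob_on_mask_1 = [(10, 3), (11, 3), (12, 3)]  # centroid y = 11
blob_on_mask_2 = [(14, 3), (15, 3), (16, 3)]  # centroid y = 15
print(first_line_touched(blob_on_mask_1, blob_on_mask_2))  # 1
```

The function reports only the order in which the lines were crossed. Mapping that order to a physical direction such as right to left depends on where the two lines are drawn in the frame.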

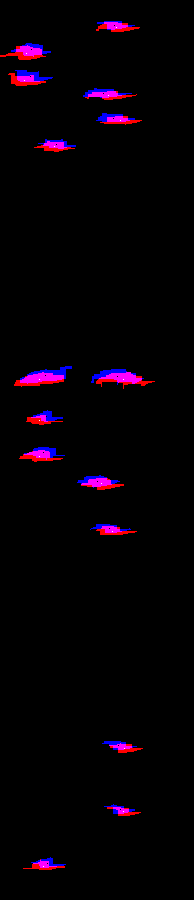

Our next step was to overlay the two masks in order to show both slit image masks simultaneously. Blobs on the first mask are colored blue and those on the second one are colored red, so the shift is clearly visible.

In the example above, all blue blobs lie higher than the red ones, so all cars were moving in the same direction. If a car moves in the opposite direction, its blue blob lies below the red one.
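An overlay of this kind could be produced along the following lines. This is a sketch that assumes two equally sized binary masks stored as lists of rows. The function name `overlay_masks` is hypothetical, and the magenta color for pixels covered by both masks is a side effect of using separate color channels, not something stated about the original visualization.

```python
def overlay_masks(mask_1, mask_2):
    """Combine two binary masks into one grid of (R, G, B) pixels.

    Blobs of mask_1 go to the blue channel and blobs of mask_2 to the
    red channel, so pixels covered by both masks come out magenta.
    """
    return [
        [(255 if m2 else 0, 0, 255 if m1 else 0)
         for m1, m2 in zip(row_1, row_2)]
        for row_1, row_2 in zip(mask_1, mask_2)
    ]


# One object whose blob starts one row (frame) earlier on the first mask.
mask_1 = [[1, 1], [1, 1], [0, 0], [0, 0]]
mask_2 = [[0, 0], [1, 1], [1, 1], [0, 0]]
overlay = overlay_masks(mask_1, mask_2)
# Rows from top to bottom: blue, magenta, red, black.
```

Here blue appears above red, which corresponds to an object that touched line No 1 first.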

Another vivid example is a video clip recorded in the office. After processing it, we obtained the following graphic results:

Interpretation: 1st stripe – slit image; 2nd stripe – filtered mask of the slit image; 3rd – two overlaid masks of slit images.

This shows that people were heading in different directions. The algorithm detected 23 objects: 12 were moving one way and 11 the opposite way.

Summary

The algorithm applied by Abto Software proves efficient in terms of both resources and time. It helps users make the most of their data by analyzing it and providing a valuable, easily applicable outcome.

The approach has a low computational cost, is simple to operate, reaches an accuracy level of 90%, and is flexible and versatile. It is easily implemented and does not demand any additional equipment. This universal method opens one more window of opportunity for various business domains, mass production, and social spheres. Interested in how it can be applied in your case? Contact our team of experts.